Models & Providers

This page explains how Fluent organizes models and providers, and how to choose a practical setup.

In Fluent:

- A Model is the AI itself

- A Provider is the service or runtime that serves that model

Fluent supports cloud providers, local models, Apple Intelligence, and custom OpenAI-compatible providers. You can keep several providers configured at the same time and switch between them when needed.

Supported Providers

Fluent supports these providers natively:

- OpenAI

- Google

- Anthropic

- Grok

- Mistral

- Perplexity

- OpenRouter

- NanoGPT

- Straico

- Apple Intelligence

- Local (MLX)

Fluent also supports custom OpenAI-compatible providers such as Ollama, LM Studio, Open WebUI, Groq, Cerebras, DeepSeek, Qwen, Poe, and similar endpoints.

Fluent can expose more than 500 models across these sources.

Cloud

Cloud providers are the simplest place to start.

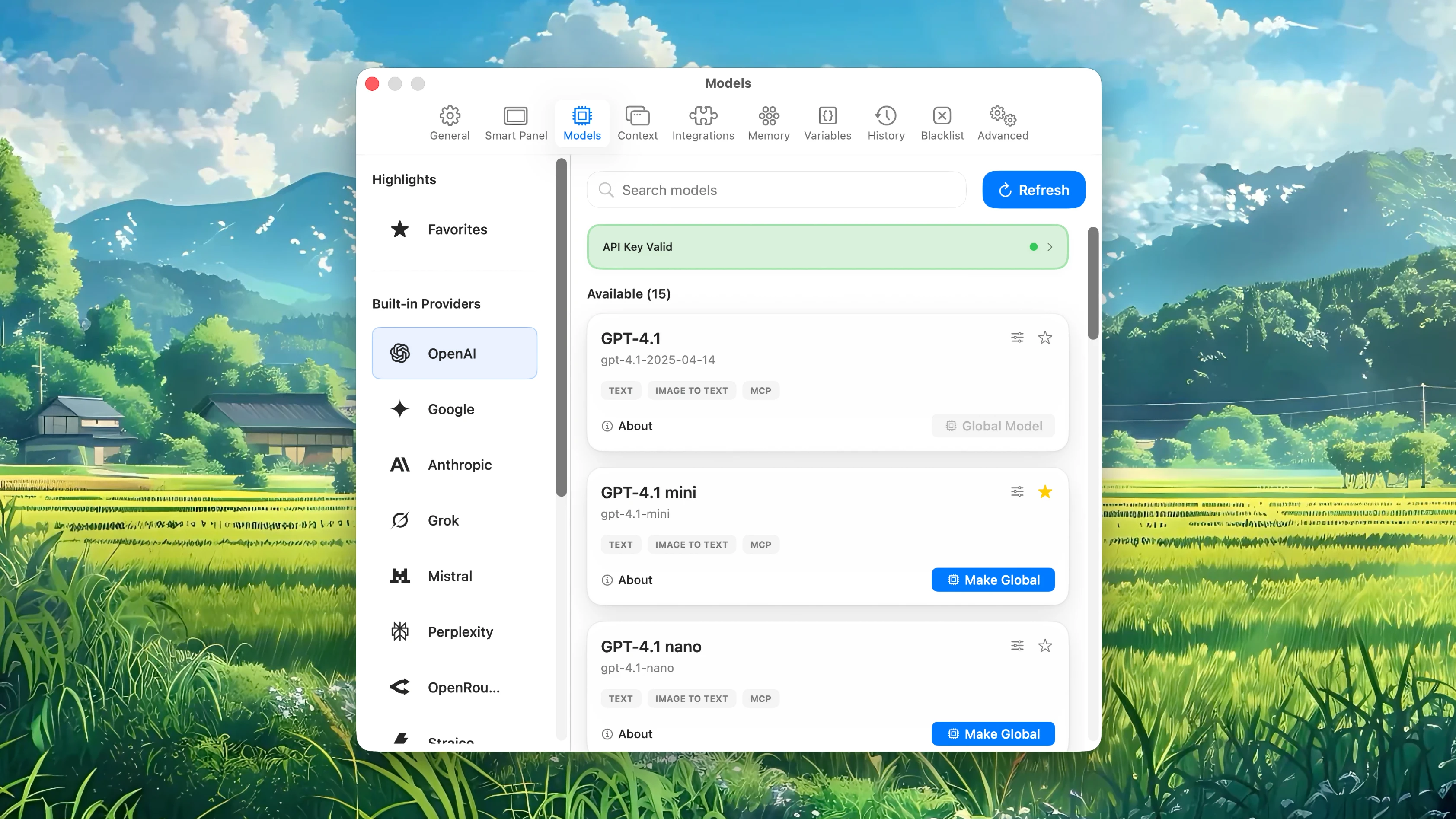

Open Settings → Models, add the API key for the provider you want, then choose a model. Fluent highlights recommended models first.

Practical starting points:

- OpenAI for a straightforward general-purpose setup

- Google if you prefer Gemini models

- OpenRouter if you want one key with a large multi-provider catalog

Use the other native providers when you already know you want those model families.

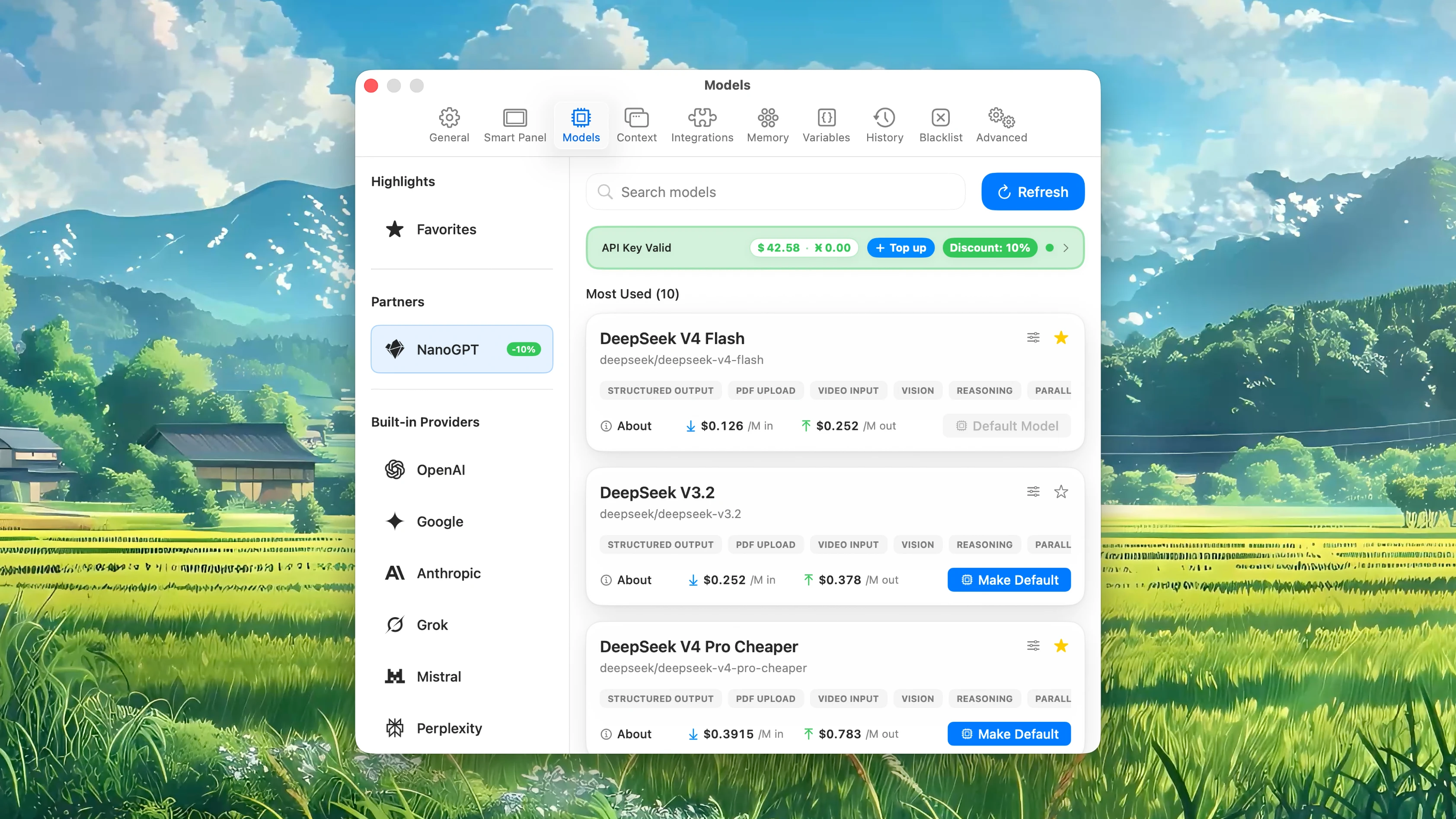

NanoGPT

NanoGPT is a privacy-focused AI gateway, much like Fluent itself. A single connection gives you access to hundreds of providers and models, and NanoGPT charges no commission on top-ups, so the amount you add is the amount you can spend.

NanoGPT is a native built-in provider in Fluent. To use it, create a NanoGPT account, then add your API key in Settings → Models.

As an official NanoGPT partner, Fluent shows a Get 10% Discount link in the NanoGPT API key section (available once you have purchased Fluent). Opening it:

- Takes you to NanoGPT and automatically generates an API key set up for Fluent

- Applies an exclusive 10% token discount to that key on every model, including the latest models such as Opus 4.8 and GPT-5.5

- Credits a one-time $5–$10 gift for new customers who purchased Fluent between June 1 and July 31

A NanoGPT account is required. Once the generated key is in Settings → Models, you can use any model right away.

Local

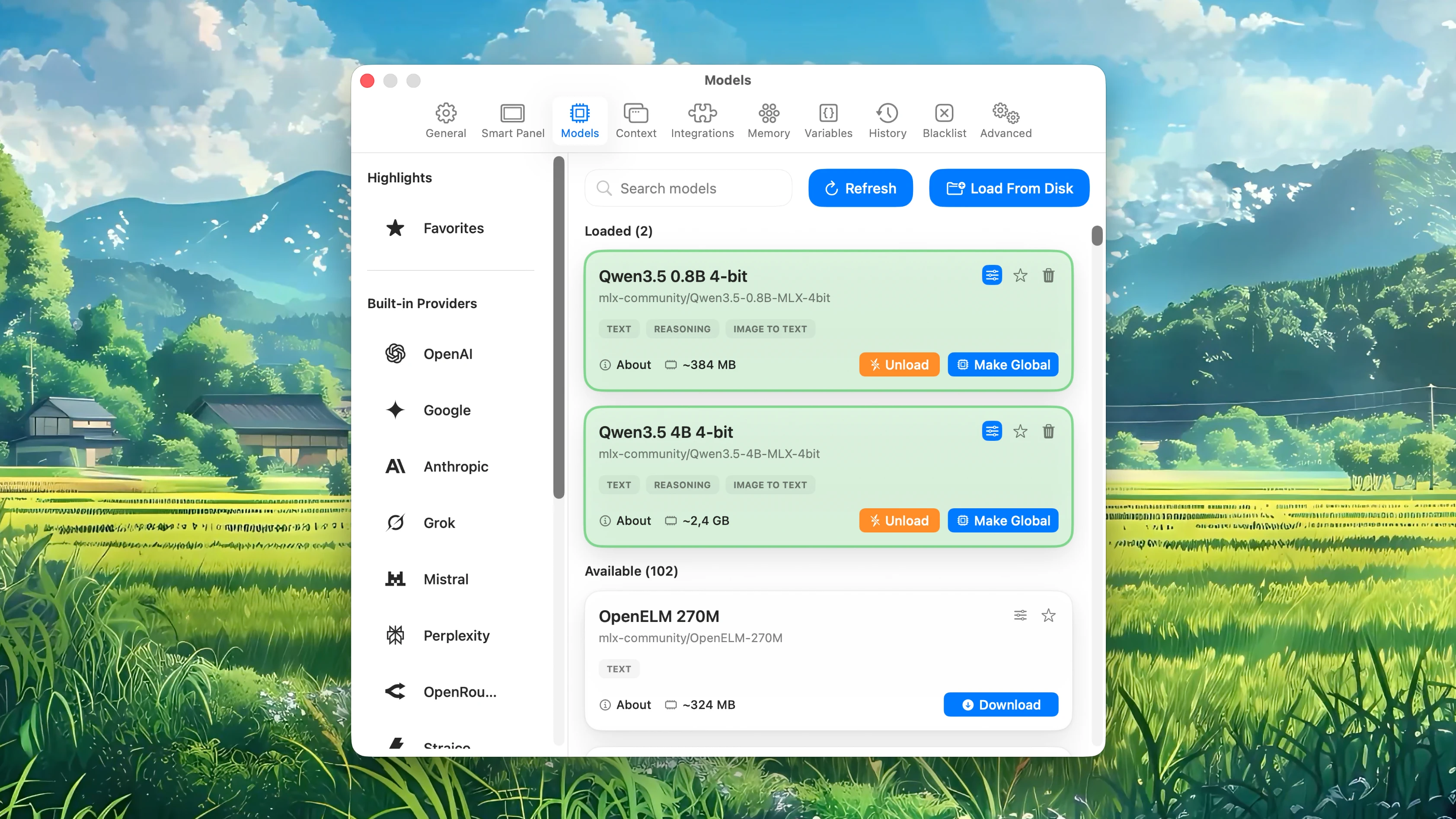

Local (MLX) is Fluent's native on-device model path on Apple Silicon.

Use it when you want:

- On-device execution

- Lower cloud usage

- Better privacy for certain tasks

- Offline-capable local workflows

Fluent includes a large built-in MLX catalog that you can browse and download from. You can also load a local model folder from disk.
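Before loading a model folder from disk, it can help to check that the download looks complete. The sketch below is only an illustration: it assumes the layout commonly used by MLX-format models (a `config.json` file plus one or more `.safetensors` weight files). Fluent's actual requirements may differ, and the function name is hypothetical.

```python
import tempfile
from pathlib import Path


def looks_like_model_folder(folder: str) -> bool:
    """Return True if the folder has a config.json and at least one .safetensors file.

    This is an assumed layout, not a documented Fluent requirement.
    """
    path = Path(folder)
    if not path.is_dir():
        return False
    has_config = (path / "config.json").is_file()
    has_weights = any(path.glob("*.safetensors"))
    return has_config and has_weights


# Demonstration with a throwaway folder and empty placeholder files.
with tempfile.TemporaryDirectory() as tmp:
    print(looks_like_model_folder(tmp))  # False: the folder is empty
    (Path(tmp) / "config.json").write_text("{}")
    (Path(tmp) / "model.safetensors").write_bytes(b"")
    print(looks_like_model_folder(tmp))  # True: both expected files exist
```

A check like this only confirms that the expected files are present. It does not validate that the weights are usable.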

Apple Intelligence

Apple Intelligence is another on-device option.

If it is available and enabled on your Mac, Fluent can use it directly. No provider API key is required.

Use it when you want a lightweight on-device path for simple tasks, or when you want to keep a private fallback available alongside cloud and MLX models.

Custom Providers

Use a custom provider when your model setup already exists somewhere else.

Open Settings → Models, add a custom OpenAI-compatible provider, enter the base URL, add the API key if needed, and let Fluent fetch the model list.

This is useful for:

- Ollama

- LM Studio

- Open WebUI

- Internal gateways

- Other providers that Fluent does not support natively

API keys are stored in the macOS Keychain.
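If you want to confirm that an endpoint is OpenAI-compatible before adding it, you can request its model list yourself. The sketch below is not Fluent's implementation. It assumes the common OpenAI-style convention in which the model list is served at `<base URL>/models` and returns a `data` array of objects with an `id` field. The function names and sample model names are hypothetical, and `http://localhost:11434/v1` is the usual Ollama default, which may differ on your machine.

```python
import json
import urllib.request
from typing import List, Optional


def models_url(base_url: str) -> str:
    """Build the model-list URL for an OpenAI-compatible base URL."""
    return base_url.rstrip("/") + "/models"


def parse_model_ids(payload: dict) -> List[str]:
    """Extract sorted model IDs from an OpenAI-style list response."""
    return sorted(item["id"] for item in payload.get("data", []) if "id" in item)


def fetch_model_ids(base_url: str, api_key: Optional[str] = None) -> List[str]:
    """Request the model list. Local servers often need no API key."""
    request = urllib.request.Request(models_url(base_url))
    if api_key:
        request.add_header("Authorization", f"Bearer {api_key}")
    with urllib.request.urlopen(request, timeout=10) as response:
        return parse_model_ids(json.load(response))


# Offline demonstration with a hand-written sample response.
sample = {"object": "list", "data": [{"id": "model-b"}, {"id": "model-a"}]}
print(models_url("http://localhost:11434/v1/"))  # http://localhost:11434/v1/models
print(parse_model_ids(sample))  # ['model-a', 'model-b']
```

With a server running, `fetch_model_ids("http://localhost:11434/v1")` should return the models that server offers. If the call fails or the response has no `data` array, the endpoint may not follow the OpenAI convention, or the base URL may need a different path.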

Choosing A Setup

Recommended starting points:

- If you want the shortest setup, start with OpenAI or Google

- If you want the widest catalog, use OpenRouter

- If you want one key with a large catalog plus an exclusive Fluent discount, use NanoGPT

- If privacy matters most, use Local (MLX) or Apple Intelligence

- If you already use Ollama or LM Studio, add them as Custom Providers

You do not need one provider for every task on day one. One good default is enough.

Default Model

Fluent uses a global default model for most requests.

Choose a model that is stable and fast enough for your everyday work. Then use per-action overrides only where a specific action needs a different model.

Typical pattern:

- One faster default model for everyday writing and editing

- One stronger model for research or complex prompts

- One local model for private notes or on-device work

This is usually easier than switching the whole app to a different model every time.
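The default-plus-overrides pattern amounts to a simple fallback lookup. The following is a conceptual sketch only, not Fluent's code or settings format. The action names and model names are placeholders chosen for illustration.

```python
from typing import Dict


def resolve_model(action: str, default_model: str, overrides: Dict[str, str]) -> str:
    """Return the override for an action if one exists, else the global default."""
    return overrides.get(action, default_model)


# Placeholder names: one fast default plus two per-action overrides.
DEFAULT_MODEL = "fast-cloud-model"
OVERRIDES = {
    "research": "strong-cloud-model",
    "private-notes": "local-mlx-model",
}

print(resolve_model("rewrite", DEFAULT_MODEL, OVERRIDES))   # fast-cloud-model
print(resolve_model("research", DEFAULT_MODEL, OVERRIDES))  # strong-cloud-model
```

Only the actions listed in the overrides change models. Everything else keeps using the default, which is why a single well-chosen default covers most work.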

Favorites

If you switch models often, mark the useful ones as favorites.

Favorites reduce the amount of scrolling in model menus and make quick switching more practical inside the Smart Panel.

For most people, a small set is enough:

- One or two cloud favorites

- One local favorite

- One model reserved for a heavier task type